Build Lab

Every EU AI Act compliance question you ask an AI today comes back with the same three sins: it cites the wrong article, it confuses provider obligations with deployer obligations, and it hallucinates a Recital that doesn't exist. The output is confident. It is also wrong. And when your auditor catches it, the AI has already moved on to the next question while you are rebuilding the paper trail.

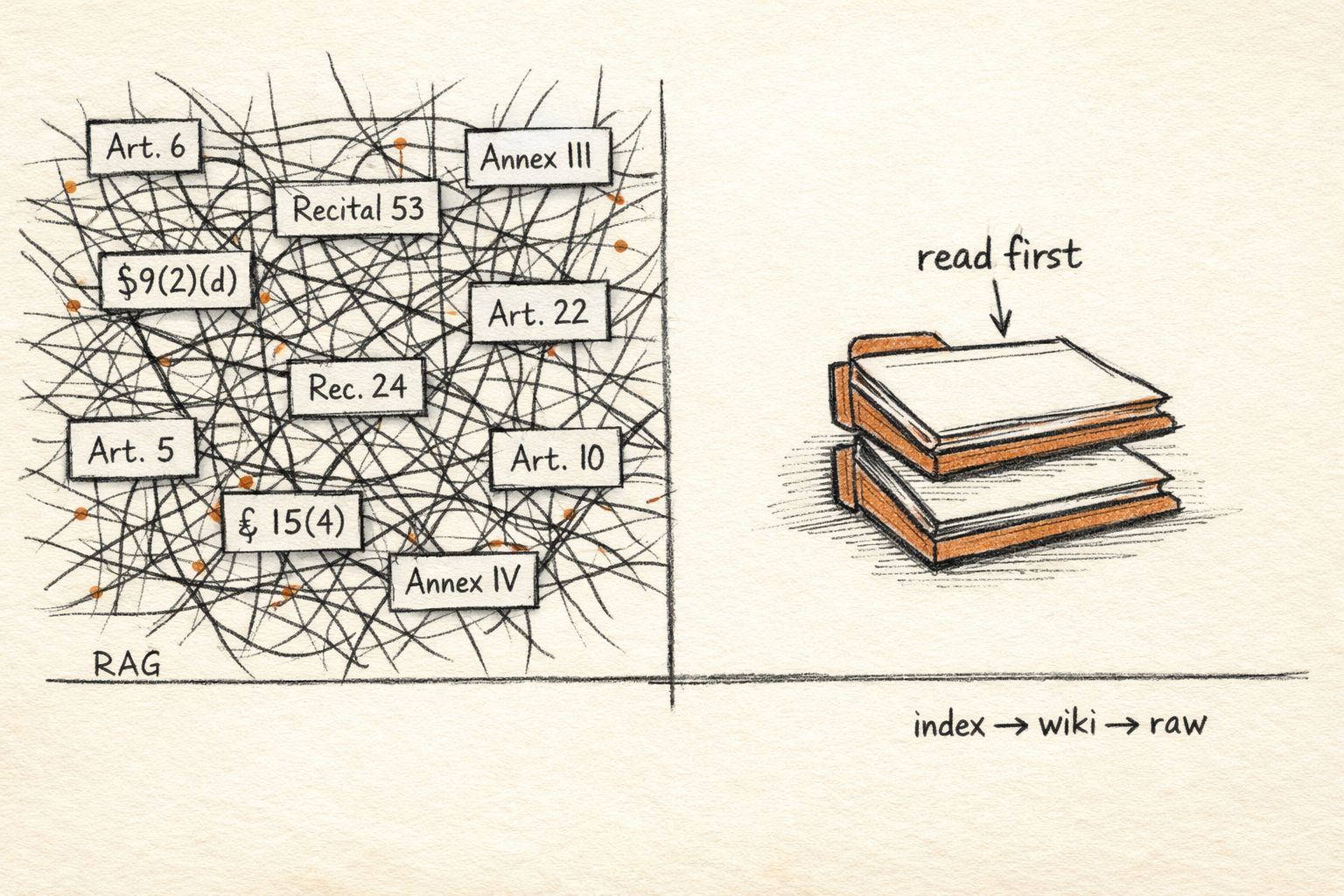

The reflex fix is RAG — embed the regulation, chunk it, retrieve on query. It helps. It doesn't solve the problem. Chunks lose context. Articles cross-reference each other. Recitals interpret Articles, and Articles interpret Annexes, and the dependency graph doesn't survive a naive cosine-similarity search.

Andrej Karpathy came up with a better pattern, and it uses exactly three folders.

Thesis

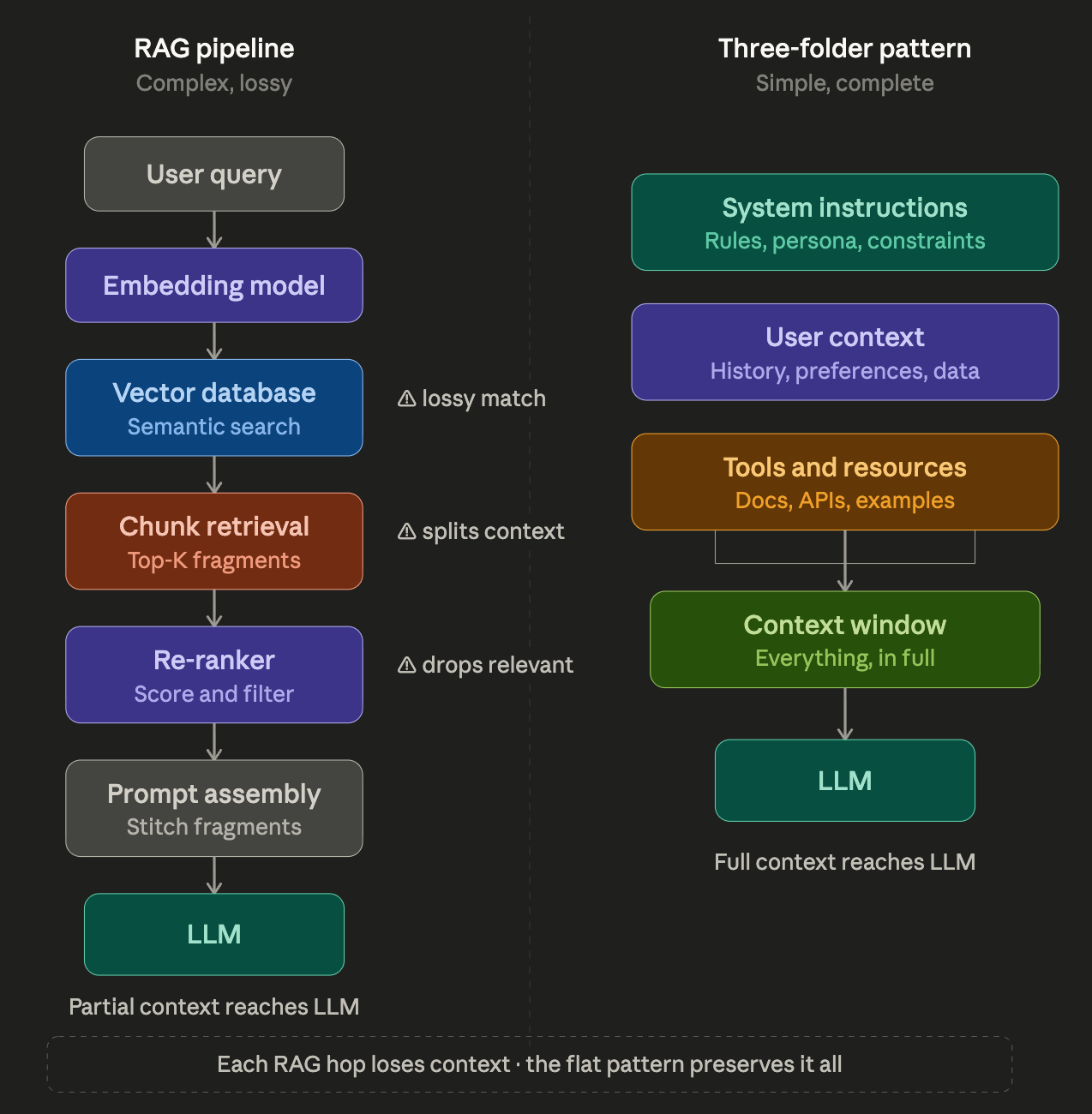

Karpathy's architecture for LLM-powered knowledge bases is three directories and one index file:

raw/: your source material, unmodified. PDFs, scraped HTML, official regulation texts.

wiki/: LLM-compiled summary articles, one per concept, maintained incrementally.

index.md: a master map, sized to fit your model's context window, that the LLM reads first to decide which wiki articles to load.

No embeddings. No vector database. No chunking heuristics. The LLM does the retrieval by reading the index and pulling the relevant wiki article into context.

For a domain as structured as EU regulation — where concepts have canonical names, articles have numbers, and the corpus changes slowly — this architecture is dramatically better than RAG. Here's how to build one for the AI Act in a weekend.

Architecture Overview

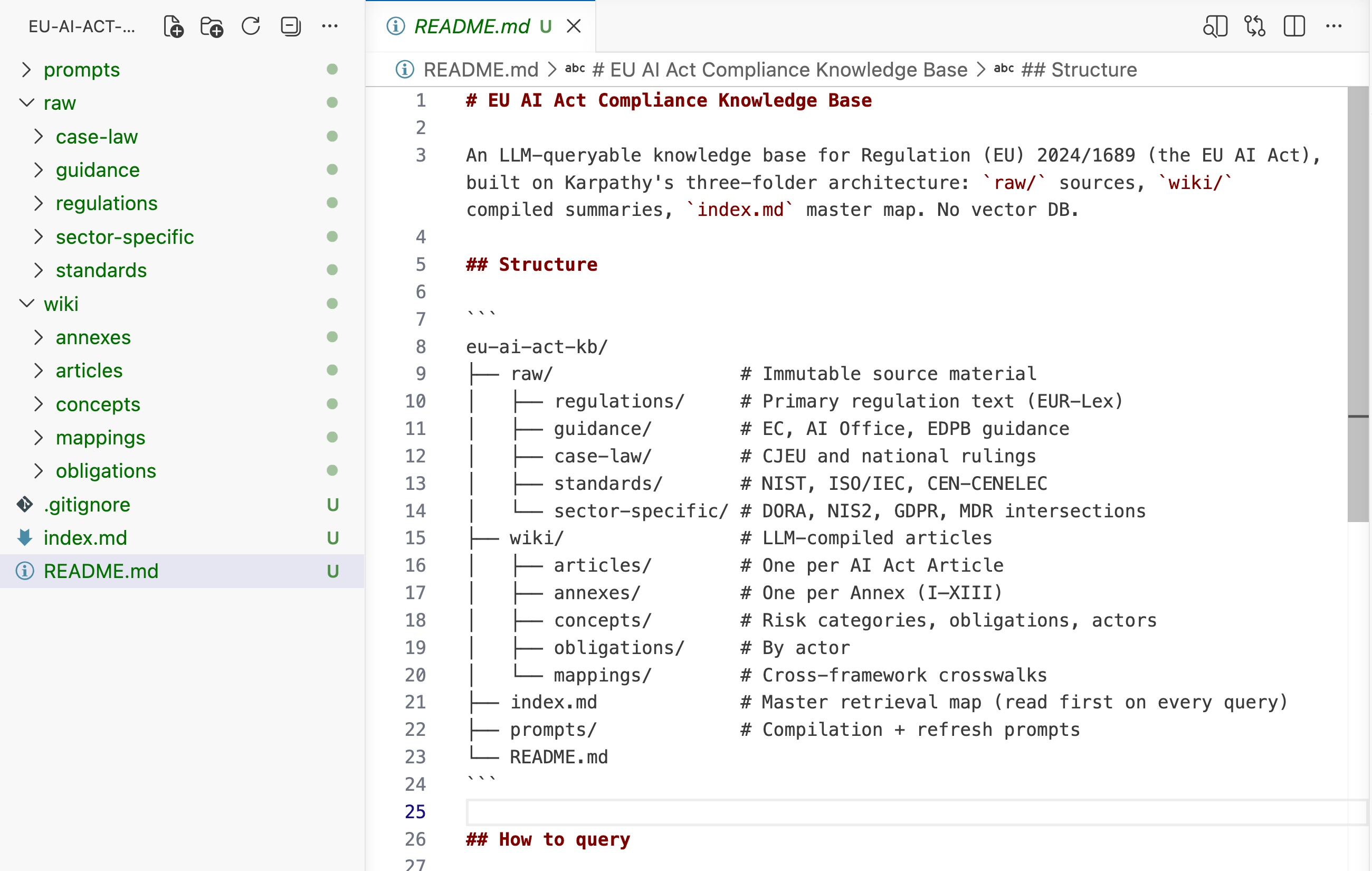

eu-ai-act-kb/

├── raw/

│ ├── regulations/ # Primary sources, unmodified

│ ├── guidance/ # EC + AI Office + EDPB guidance

│ ├── case-law/ # CJEU + national rulings

│ ├── standards/ # NIST, ISO/IEC, CEN-CENELEC

│ └── sector-specific/ # DORA, NIS2, GDPR, MDR intersections

├── wiki/

│ ├── articles/ # One per AI Act Article

│ ├── annexes/ # One per Annex (I–XIII)

│ ├── concepts/ # Risk categories, obligations, actors

│ ├── obligations/ # By actor (provider, deployer, GPAI, importer)

│ └── mappings/ # AI Act ↔ NIST, ↔ GDPR, ↔ DORA

├── index.md # Master map, ~3K tokens

├── prompts/ # The prompts you use to compile wikis

└── README.md # What this is + how to query it

The complete scaffold. Clone/Download the starter repo (link at the end) to skip to Step 2.

Each layer has one job:

| Layer | Job | What lives here |
|---|---|---|
| raw/ | Source of truth | Original regulation text, guidance PDFs, court rulings; never edited |
| wiki/ | Working memory | Compiled articles that the LLM reads at query time |
| index.md | Retrieval map | The only file read on every query |
| prompts/ | Repeatable compilation | Standard prompts for wiki creation + refresh |

The separation matters. raw/ is immutable history. wiki/ is versioned interpretation. When the AI Act gets amended (it will — the AI Office is already drafting secondary legislation), you update raw/, re-run the compilation prompt, and wiki/ rebuilds without touching the rest of your pipeline.

Build Steps

Step 1 — Scaffold the Repo

mkdir eu-ai-act-kb && cd eu-ai-act-kb

git init

mkdir -p raw/{regulations,guidance,case-law,standards,sector-specific}

mkdir -p wiki/{articles,annexes,concepts,obligations,mappings}

mkdir prompts

touch index.md README.md

Add a .gitignore:

.DS_Store

*.pdf.tmp

.cache/

Commit the empty scaffold. You want the first real change to be a clean diff.

Step 2 — Ingest the Primary Regulation

Download the consolidated text of Regulation (EU) 2024/1689 from EUR-Lex. You want three formats:

HTML consolidated — best for grep-ability

PDF — your citation source

Annexes separately — they change independently

cd raw/regulations

mkdir eu-ai-act-2024-1689

cd eu-ai-act-2024-1689

# Consolidated text (HTML → clean markdown)

curl -o consolidated.html "https://eur-lex.europa.eu/legal-content/EN/TXT/HTML/?uri=CELEX:32024R1689"

# PDF for citations

curl -o regulation.pdf "https://eur-lex.europa.eu/legal-content/EN/TXT/PDF/?uri=CELEX:32024R1689"

# Convert HTML to markdown (pandoc or claude-code itself)

pandoc consolidated.html -t gfm -o consolidated.md

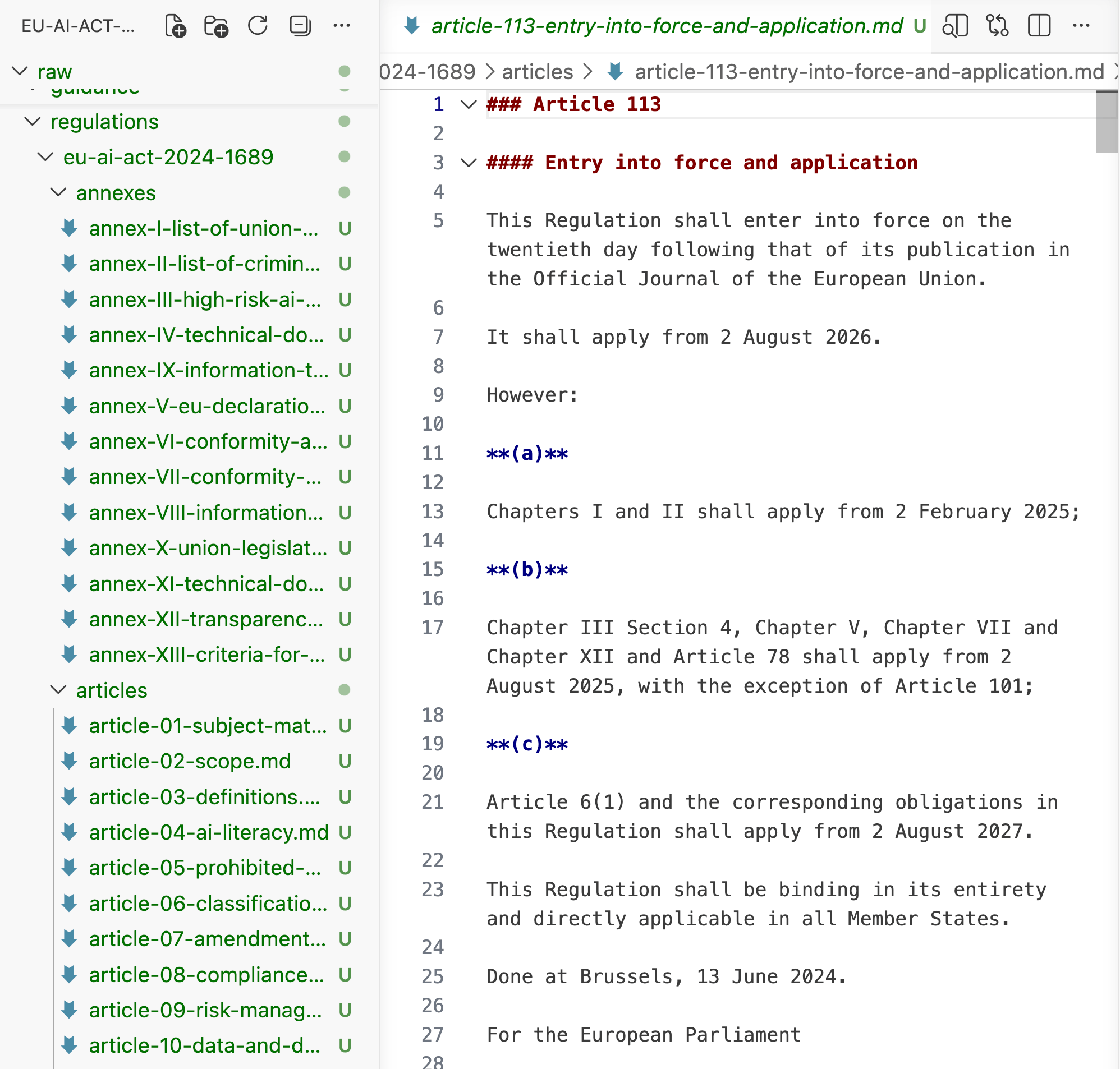

Then split into per-article files. This matters because during compilation you want to feed single articles to the LLM, not the whole regulation.

raw/regulations/eu-ai-act-2024-1689/

├── regulation.pdf

├── consolidated.md

├── recitals.md

├── articles/

│ ├── article-01-subject-matter.md

│ ├── article-02-scope.md

│ ├── article-03-definitions.md

│ ├── article-06-classification-rules.md # ← anchor

│ ├── article-09-risk-management-system.md # ← anchor

│ ├── article-55-obligations-gpai-systemic.md # ← anchor

│ └── ...

└── annexes/

    ├── annex-I-union-harmonisation-legislation.md

    ├── annex-III-high-risk-use-cases.md # ← anchor

    └── ...

Splitting can be done by LLM — feed consolidated.md to Claude with a prompt like:

Split this EU AI Act regulation text into one markdown file per Article and

per Annex. Preserve article numbers in filenames as article-NN-kebab-case-title.md.

Keep all citations intact. Do not summarize.

Each Article is its own file. That's what compilation prompts will feed into.
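If you would rather not spend tokens on a mechanical split, a plain script can do the same job. This is a minimal sketch, assuming consolidated.md puts each Article heading on its own line (for example `Article 6`) with the title on the next non-empty line. Check what pandoc actually produced before trusting that assumption. The function names `slugify`, `split_articles` and `write_articles` are illustrative, not part of any tool.

```python
import re
from pathlib import Path

# Assumed heading shape: a line containing only "Article N", optionally
# prefixed with markdown '#' characters. In-text references such as
# "Article 6(1)" do not match because they are not alone on a line.
ARTICLE_HEADING = re.compile(r"^[ \t]*#*[ \t]*Article[ \t]+(\d+)[ \t]*$", re.MULTILINE)


def slugify(title: str) -> str:
    """Lowercase the title and collapse non-alphanumeric runs into '-'."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def split_articles(text: str) -> dict[str, str]:
    """Return {filename: body}, one entry per Article heading found."""
    matches = list(ARTICLE_HEADING.finditer(text))
    files: dict[str, str] = {}
    for i, match in enumerate(matches):
        end = matches[i + 1].start() if i + 1 < len(matches) else len(text)
        body = text[match.start():end].strip()
        # The first non-empty line after the heading is treated as the title.
        rest = [ln.strip() for ln in text[match.end():end].splitlines() if ln.strip()]
        title = rest[0].strip("#* ") if rest else "untitled"
        name = f"article-{int(match.group(1)):02d}-{slugify(title)}.md"
        files[name] = body + "\n"
    return files


def write_articles(text: str, out_dir: str) -> int:
    """Write each split Article to out_dir and return the number of files."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    files = split_articles(text)
    for name, body in files.items():
        (out / name).write_text(body, encoding="utf-8")
    return len(files)
```

Usage would look like `write_articles(Path("consolidated.md").read_text(encoding="utf-8"), "articles")`. One caveat: the last Article's body runs to the end of the input, so cut the Annexes out of the text first or they end up in that final file. The text is copied verbatim, which keeps the "do not summarize" rule without relying on the model to obey it.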

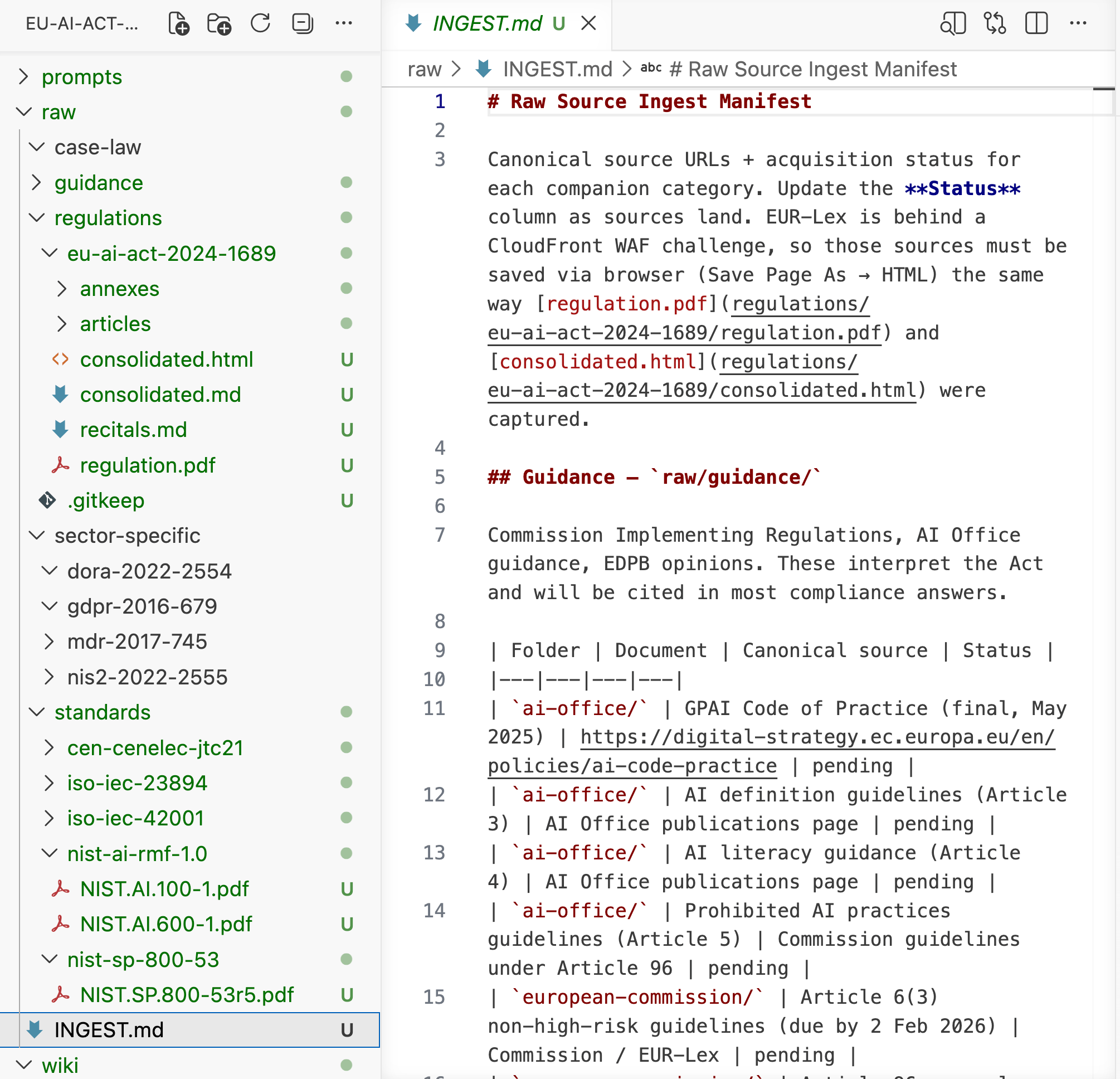

Step 3 — Ingest Companions (Guidance, Standards, Sector-Specific)

Order matters. Build out from the Act:

raw/guidance/: Commission Implementing Regulations, AI Office guidance, EDPB opinions on AI. These interpret the Act; you will need them cited in 80% of compliance questions.

raw/standards/: NIST AI RMF 1.0, NIST SP 800-53 overlays (the new agentic AI overlays NIST is drafting), ISO/IEC 42001, ISO/IEC 23894, CEN-CENELEC JTC 21 deliverables.

raw/sector-specific/: DORA (for financial services AI), NIS2 (cybersecurity intersection), GDPR (data governance), MDR (medical device AI). Ingest only what applies to your client base.

raw/case-law/: start empty. Add CJEU rulings as they accumulate. For now, key GDPR cases that inform AI Act interpretation (Schrems II, Meta Platforms Ireland).

For each companion document, same principle: raw, unmodified, split into retrievable chunks only where the source is structured (articles, sections). Leave continuous prose alone.

After Step 3 you have roughly 200–400 files in raw/. None of them are read at query time — they feed the compilation prompts.

Step 4 — Write the Compilation Prompts

Create prompts/compile-article-wiki.md. This is the prompt Claude runs to turn a raw Article file into a wiki article.

# Compile Article Wiki

You are compiling a wiki article for the EU AI Act knowledge base.

Your reader is an enterprise compliance officer or AI governance lead.

INPUT: A raw AI Act Article file from raw/regulations/eu-ai-act-2024-1689/articles/

OUTPUT: A wiki/articles/article-NN-title.md file with this structure:

---

article: NN

title: <canonical title from regulation>

actors: [provider|deployer|importer|distributor|gpai-provider|authorised-representative]

risk_tier: [prohibited|high-risk|limited-risk|minimal-risk|gpai|gpai-systemic|n/a]

cross_references:

  articles: [list of referenced Article numbers]

  annexes: [list of referenced Annex numbers]

  recitals: [list of referenced Recital numbers]

related_standards: [NIST AI RMF sections, ISO 42001 clauses]

status: [draft|reviewed|stale]

last_reviewed: YYYY-MM-DD

---

# Article NN: <Title>

## Plain summary

<3–5 sentences. What does this Article actually require, in operational terms?>

## Who it applies to

<Exact actor list. Reference Article 3 definitions if needed.>

## What they must do

<Numbered list of concrete obligations. Cite paragraph numbers.>

## What triggers the obligation

<The conditions that make this Article binding. Temporal, scope, classification.>

## Common misinterpretations

<2–3 bullet points on how this Article is typically misread.>

## Cross-references

<Links to related wiki articles: [[article-09]], [[annex-III]], [[concept-high-risk]]>

## Source

- Raw: raw/regulations/eu-ai-act-2024-1689/articles/article-NN-*.md

- Primary PDF: raw/regulations/eu-ai-act-2024-1689/regulation.pdf (page X–Y)

- Last EUR-Lex check: YYYY-MM-DD

## Open questions

<What is ambiguous in the text? What is the AI Office expected to clarify?>

Create similar prompts:

prompts/compile-annex-wiki.md

prompts/compile-concept-wiki.md

prompts/compile-obligation-wiki.md

prompts/compile-mapping-wiki.md (AI Act ↔ NIST, ↔ GDPR)

prompts/refresh-wiki.md (re-run when raw/ changes)

prompts/generate-index.md

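Why prompts live in the repo: compilation must be repeatable and reviewable. LLM output is never strictly deterministic, but a versioned prompt means anyone can re-run the same instructions and diff the result. When a wiki article gets a factual correction, you fix the prompt AND the article, and both ship in the same commit.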
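Reviewable also means machine-checkable. A small validator can reject a compiled article before a human ever reads it. The sketch below is one way to do that, assuming the compiled frontmatter uses comma-separated lists such as `actors: [provider, deployer]` and the allowed values listed in the prompt above. It uses a deliberately naive flat parser instead of a YAML library, and the function names are hypothetical.

```python
from datetime import date

# Allowed values copied from the frontmatter template in the compile prompt.
ALLOWED_ACTORS = {"provider", "deployer", "importer", "distributor",
                  "gpai-provider", "authorised-representative"}
ALLOWED_TIERS = {"prohibited", "high-risk", "limited-risk", "minimal-risk",
                 "gpai", "gpai-systemic", "n/a"}
ALLOWED_STATUS = {"draft", "reviewed", "stale"}


def parse_frontmatter(text: str) -> dict[str, str]:
    """Collect 'key: value' pairs between the first two '---' lines.

    Naive on purpose: nested keys are flattened and values stay strings.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    fields: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, sep, value = line.partition(":")
        if sep and key.strip():
            fields[key.strip()] = value.strip()
    return fields


def as_list(value: str) -> list[str]:
    """Turn '[a, b]' or 'a' into a list of stripped items."""
    return [item.strip() for item in value.strip("[]").split(",") if item.strip()]


def validate(text: str) -> list[str]:
    """Return human-readable problems; an empty list means the file passes."""
    fm = parse_frontmatter(text)
    problems: list[str] = []
    if not fm.get("article", "").isdigit():
        problems.append("article: expected a number")
    if not fm.get("title"):
        problems.append("title: missing")
    actors = as_list(fm.get("actors", ""))
    if not actors or set(actors) - ALLOWED_ACTORS:
        problems.append(f"actors: missing or unknown value in {actors}")
    tiers = as_list(fm.get("risk_tier", ""))
    if not tiers or set(tiers) - ALLOWED_TIERS:
        problems.append(f"risk_tier: missing or unknown value in {tiers}")
    if fm.get("status") not in ALLOWED_STATUS:
        problems.append("status: expected draft, reviewed or stale")
    try:
        date.fromisoformat(fm.get("last_reviewed", ""))
    except ValueError:
        problems.append("last_reviewed: expected YYYY-MM-DD")
    return problems
```

Run it over every file in wiki/ as a pre-commit check: an article that still carries the placeholder `last_reviewed: YYYY-MM-DD` or an invented actor fails before it reaches the index.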

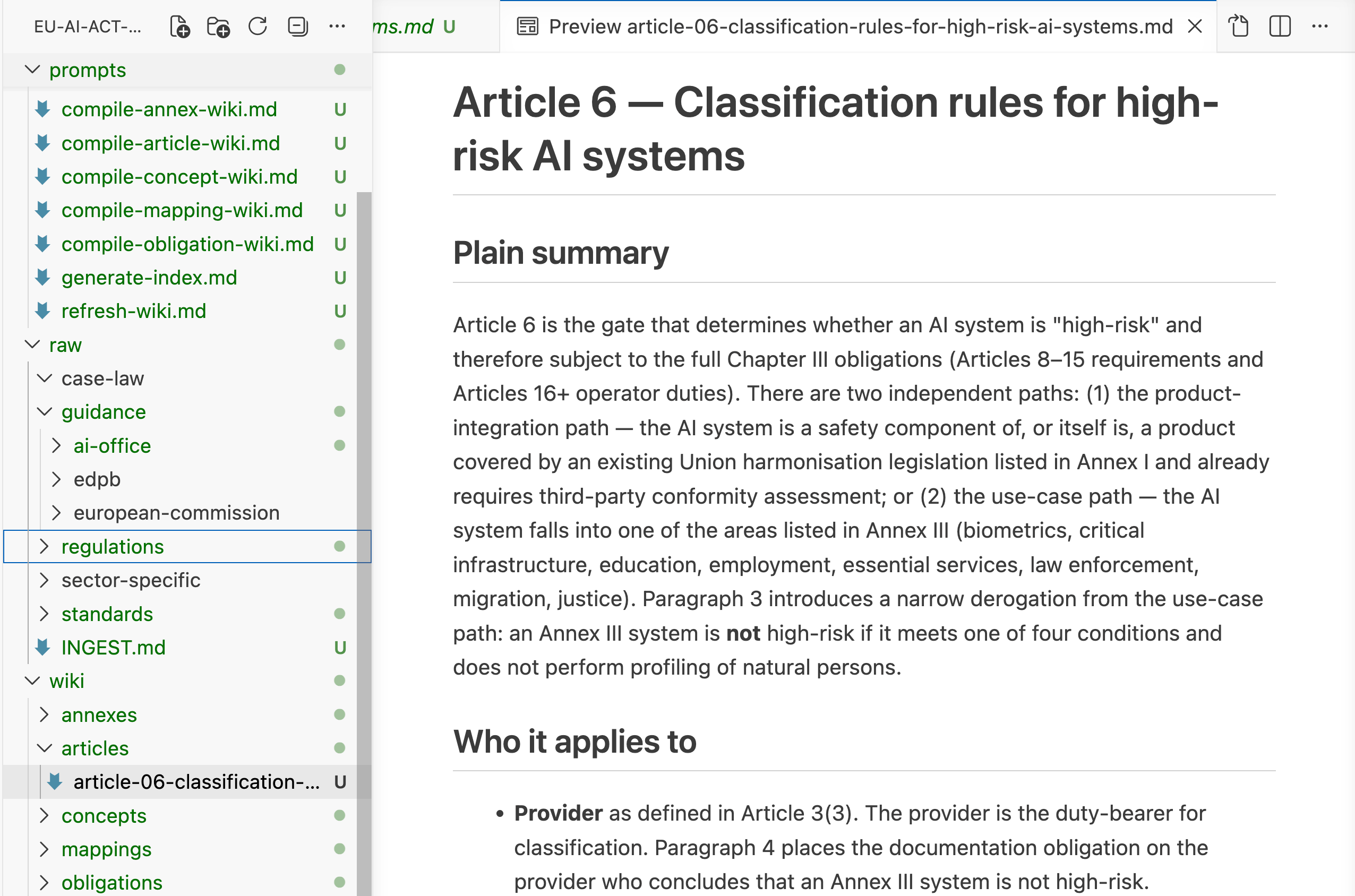

Step 5 — Compile the Five Anchor Articles First

Don't compile all 113 Articles. Compile five. These five cover ~80% of the compliance questions you'll get:

Article 6 — Classification rules for high-risk AI systems

Article 9 — Risk management system

Article 10 — Data and data governance

Article 14 — Human oversight

Article 55 — Obligations for providers of GPAI models with systemic risk

Run prompts/compile-article-wiki.md against each, one at a time. Review the output. Correct the output. Commit.

The anchor article. Frontmatter drives the index; the body is what the LLM loads at query time.

Same pattern for anchor Annexes (I, III, IV) and concepts (high-risk, gpai, prohibited-practices, substantial-modification).

Target end-of-Step-5 state: 15–20 wiki articles total. That's enough to answer most classification and obligation questions.

Step 6 — Generate index.md

The index is the only file the LLM reads on every query. It must fit in your model's context window with room to spare (target ≤ 3K tokens for Claude, ≤ 2K for smaller models).
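A budget is only useful if something checks it. Here is a rough sketch of a size guard, assuming about four characters per token for English text. That ratio is a heuristic, not a real tokenizer, and wikilink-heavy markdown tends to use more tokens than plain prose, so treat the result as a lower bound. `estimate_tokens` and `check_index_budget` are illustrative names.

```python
from pathlib import Path

CHARS_PER_TOKEN = 4  # rough heuristic for English text, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Estimate the token count by ceiling division on character length."""
    return -(-len(text) // CHARS_PER_TOKEN)


def check_index_budget(text: str, budget: int = 3000) -> tuple[int, bool]:
    """Return (estimated tokens, True if within budget)."""
    tokens = estimate_tokens(text)
    return tokens, tokens <= budget


if __name__ == "__main__":
    index_path = Path("index.md")
    if index_path.exists():
        tokens, ok = check_index_budget(index_path.read_text(encoding="utf-8"))
        print(f"index.md: ~{tokens} tokens, {'within' if ok else 'over'} budget")
```

If you need an exact figure, swap the heuristic for the token counter that ships with your model provider.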

Run prompts/generate-index.md against the wiki/ directory. The output looks like:

# EU AI Act Knowledge Base — Index

Last generated: 2026-04-17

Coverage: 20 articles, 5 annexes, 12 concepts, 8 obligations, 4 mappings

## By Article

- [[wiki/articles/article-05]] — Prohibited AI practices

- [[wiki/articles/article-06]] — Classification rules for high-risk systems

- [[wiki/articles/article-09]] — Risk management system (high-risk)

- [[wiki/articles/article-10]] — Data and data governance (high-risk)

- [[wiki/articles/article-14]] — Human oversight (high-risk)

- [[wiki/articles/article-15]] — Accuracy, robustness, cybersecurity (high-risk)

- [[wiki/articles/article-55]] — Obligations for GPAI providers with systemic risk

## By Actor

- **Provider** obligations: [[article-16]], [[article-17]], [[article-18]], [[article-19]]

- **Deployer** obligations: [[article-26]], [[article-27]]

- **GPAI provider** obligations: [[article-53]], [[article-54]], [[article-55]]

## By Risk Tier

- Prohibited: [[article-05]]

- High-risk: [[article-06]], [[annex-I]], [[annex-III]], [[article-09]] through [[article-15]]

- GPAI: [[article-51]], [[article-52]], [[article-53]]

- GPAI with systemic risk: [[article-51]] §2, [[article-55]]

## By Compliance Control

- Risk management: [[article-09]], [[obligation-risk-management]]

- Data governance: [[article-10]], [[obligation-data-governance]]

- Human oversight: [[article-14]], [[obligation-human-oversight]]

- Transparency: [[article-13]], [[article-50]], [[obligation-transparency]]

- Cybersecurity: [[article-15]], [[article-55]]

## Cross-framework Mappings

- [[mappings/ai-act-to-nist-rmf]] — Article-by-article mapping to NIST AI RMF 1.0

- [[mappings/ai-act-to-iso-42001]] — Mapping to ISO/IEC 42001:2023 AIMS

- [[mappings/ai-act-to-gdpr]] — Data governance overlap

- [[mappings/ai-act-to-dora]] — Financial sector overlap

## Recent Changes

- 2026-04-10: Updated [[article-55]] after AI Office clarification circular (raw/guidance/ai-office-2026-circular-03.pdf)

- 2026-04-03: Added [[mappings/ai-act-to-nist-rmf]]

- 2026-03-28: Added [[annex-III]] deep compile

index.md rendered in Obsidian, with the graph view on the right showing wiki article relationships. The index is a map, not a list. Claude reads this first and decides which 2–4 wiki files to load.
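An index that points at wiki files you have not compiled yet sends the LLM to a dead end. The sketch below extracts every `[[wikilink]]` from the index and reports the ones with no matching file. It assumes a link such as `[[article-06]]` is satisfied by a file whose stem is either `article-06` or starts with `article-06-`. That matching rule and the function names are my assumptions.

```python
import re

# Capture the target of a [[wikilink]], stopping at ']', '|' (alias) or '#'.
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def link_targets(index_text: str) -> list[str]:
    """Return the last path segment of each wikilink, in order, without repeats."""
    seen: list[str] = []
    for raw in WIKILINK.findall(index_text):
        target = raw.strip().split("/")[-1]
        if target not in seen:
            seen.append(target)
    return seen


def dangling_links(index_text: str, wiki_stems: set[str]) -> list[str]:
    """Return link targets that no wiki file stem satisfies."""
    def exists(target: str) -> bool:
        return any(stem == target or stem.startswith(target + "-")
                   for stem in wiki_stems)

    return [t for t in link_targets(index_text) if not exists(t)]
```

In the repo, `wiki_stems` would come from something like `{p.stem for p in Path("wiki").rglob("*.md")}`. Running this after every index regeneration keeps the "Coverage" line honest.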

Step 7 — Connect the Query Layer

Two viable paths. Pick one.

Path A: Claude Desktop + Filesystem MCP (simplest, recommended)

Install the filesystem MCP server and point it at your eu-ai-act-kb/ directory. Claude Desktop now has read access to every file.

Add a system prompt to a Claude Project:

You are an EU AI Act compliance assistant. You have access to a knowledge base at

/path/to/eu-ai-act-kb/.

For every compliance question:

1. Read index.md FIRST.

2. Identify which wiki articles are relevant. Read 2–4 of them.

3. Answer the question citing the wiki article and the underlying raw source.

4. If the question requires raw source detail, read from raw/ after wiki.

5. Never answer without citing specific wiki article(s).

6. If the relevant wiki article is missing, say so explicitly and propose what

raw/ source would need to be compiled.

Path B: Custom MCP server (better for n8n pipelines)

Build a small MCP server with tools:

query_index() → returns the index

read_wiki(article_id) → returns a wiki file

read_raw(path) → returns a raw source

list_gaps() → identifies raw sources without wiki compilation

Wire it into the same Claude Project. Same result, but now your n8n automation flows can query the KB programmatically.

Step 8 — Automate the Refresh

The regulation doesn't change often. The guidance changes weekly. Build one n8n workflow:

Every Monday 06:00 CET:

1. Fetch EUR-Lex RSS for CELEX:32024R1689 and related numbers

2. Check AI Office news page for new circulars

3. Check EDPB opinions page

4. If any delta detected:

a. Pull new source into raw/<category>/

b. Open a draft PR to the kb repo

c. Post to Gotify: "KB update available: <source>"

d. Flag affected wiki articles (via raw → wiki dependency map)

5. A human reviews the PR → merge → trigger refresh-wiki.md prompt for flagged articles

6. Re-generate index.md

7. Commit, push
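Steps 4 and 4d are where most of the logic hides: deciding that something changed, and deciding which wiki articles that change touches. A minimal sketch of both, assuming you store a SHA-256 hash per raw file from the previous run and maintain a dependency map from each wiki article to the raw files it was compiled from. Both structures and all function names are hypothetical; in n8n this would sit inside a code node or an external script.

```python
import hashlib


def fingerprint(content: bytes) -> str:
    """Stable content hash used to detect changed sources."""
    return hashlib.sha256(content).hexdigest()


def detect_deltas(previous: dict[str, str], current: dict[str, bytes]) -> list[str]:
    """Return paths that are new or whose content hash differs from last run.

    previous: {raw path: hash from the last run}
    current:  {raw path: freshly fetched bytes}
    """
    return sorted(
        path for path, data in current.items()
        if previous.get(path) != fingerprint(data)
    )


def flag_stale(changed: list[str], dependencies: dict[str, list[str]]) -> list[str]:
    """Return wiki articles compiled from at least one changed raw source.

    dependencies: {wiki path: [raw paths it was compiled from]}
    """
    changed_set = set(changed)
    return sorted(
        wiki for wiki, sources in dependencies.items()
        if changed_set & set(sources)
    )
```

The flagged list is exactly what the refresh-wiki.md prompt needs as input, and it is also a natural place to flip `status: stale` in the frontmatter until a human has reviewed the recompiled article.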

Same workflow mirrors wiki/ into your Obsidian vault (Thalos module). You get a human-readable, graph-explorable version for reading; the LLM uses the markdown directly.

Query Patterns (Worked Examples)

Pattern 1 — Classification

Question: "We deploy a CV model for pre-screening job applicants in France. Classification under Article 6?"

The LLM reads index.md, identifies article-06 and annex-III as relevant, loads both wiki files. Returns:

This system falls under high-risk per Article 6(2) because it matches Annex III, point 4(a): AI systems intended to be used for the recruitment or selection of natural persons, in particular to place targeted job advertisements, to analyse and filter job applications, and to evaluate candidates.

You are a deployer under Article 3(4). Your obligations are in Article 26. The provider of the CV model has obligations in Articles 16 and 9–15. Cross-reference [[wiki/obligations/deployer-high-risk]] for your specific compliance checklist.

Sources: wiki/articles/article-06.md · wiki/annexes/annex-III.md · raw: raw/regulations/eu-ai-act-2024-1689/articles/article-06-classification-rules.md (paragraphs 2–4)

Pattern 2 — Obligation gap analysis

Question: "We're a GPAI provider. Systemic risk threshold is 10^25 FLOPs of compute. Our model is at 5×10^24. What do we owe?"

LLM reads index.md, loads article-51 and article-53 (GPAI obligations, not systemic). Returns the filtered obligation list + the trigger conditions that would move the model into systemic risk tier. Cites the wiki + raw.
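The arithmetic behind this pattern is worth making explicit, because it is the part an LLM is most likely to fumble. A small sketch, using the 10^25 figure from the question and assuming the presumption applies only when training compute is strictly greater than the threshold. The tier labels reuse the `risk_tier` values from the frontmatter, and the function names are illustrative. Compute is not the only route into the systemic-risk tier, so this is a first filter, not a classification.

```python
SYSTEMIC_RISK_THRESHOLD_FLOPS = 10**25  # figure quoted in the question above


def gpai_tier(training_flops: int, threshold: int = SYSTEMIC_RISK_THRESHOLD_FLOPS) -> str:
    """Return the frontmatter tier implied by training compute alone."""
    return "gpai-systemic" if training_flops > threshold else "gpai"


def headroom(training_flops: int, threshold: int = SYSTEMIC_RISK_THRESHOLD_FLOPS) -> float:
    """How many times training compute can grow before reaching the threshold."""
    return threshold / training_flops


if __name__ == "__main__":
    model_flops = 5 * 10**24  # the model from the question
    print(gpai_tier(model_flops), headroom(model_flops))
```

For the model in the question, 5×10^24 is half the threshold: it sits in the plain GPAI tier, and one doubling of training compute brings it to the line.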

Pattern 3 — Cross-framework mapping

Question: "Our NIST AI RMF 1.0 controls are at 'Govern 1.2' maturity. Which AI Act Articles does that satisfy?"

LLM reads index.md, loads mappings/ai-act-to-nist-rmf. Returns the mapping table with a concrete answer: "Govern 1.2 contributes to compliance with Article 9(1)–(3), partially with Article 14, does not address Article 10." With cited sources.

What's in the Bundle (Downloadable)

onabout-ai-eu-ai-act-kb-starter.zip contains:

/scaffold/ — Empty directory structure (Step 1)

/prompts/ — All 7 compilation prompts (Step 4)

/anchor-wikis/ — 5 pre-compiled wiki articles for Articles 6, 9, 10, 14, 55

/example-index.md — Template index ready to customize

/workflow.json — n8n workflow export for refresh automation (Step 8)

/README.md — Setup guide + troubleshooting

Next Steps

You have the scaffold. Clone it, fork it, break it. If you ship something with it, reply — I read every response and the best ones end up in future editions.

Want to read more?

Part 3 — The Memory Layer: MemPalace applied to client engagement history. The KB remembers not just the regulation but every compliance conversation you've had with every client. Build Lab, May.

AI Act ↔ GDPR: the mapping every Article 10 answer needs: full walkthrough of the data-governance overlap, with the exact cross-references that survive an EDPB review. Regular edition, 2026-04-24.

FRIA in 90 minutes: how to run a Fundamental Rights Impact Assessment for a deployer using only the KB and one compilation prompt. Build Lab mini, May.

Monitoring six regulators at once: extending the n8n workflow to DORA, NIS2, MDR, and the Cyber Resilience Act without drowning in alerts. Regular edition, May.

RAG vs. the three-folder pattern, a decision tree: when each wins, how to migrate a RAG system that has already hallucinated past an auditor. Regular edition, June.

The MCP server every KB needs: building a custom query_index / read_wiki / list_gaps / cite server that plugs into Claude Desktop and n8n at the same time. Build Lab, June.

That's it for this special edition.

This Friday drop had a three-folder architecture that beats RAG on a structured corpus, Regulation (EU) 2024/1689 split into 113 per-Article files you can feed to an LLM individually, an n8n workflow that catches regulatory deltas on Monday morning before your auditor's coffee is cold, and a starter kit small enough to clone before Part 3 lands. The through-line is the same as every edition of OnAbout.AI: governance is infrastructure now. The organisations that build it in 2026 will be selling it in 2028.

Back to the regular Thursday cadence next week,

João

OnAbout.AI delivers strategic AI analysis to enterprise technology leaders. European governance lens. Vendor-agnostic. Actionable.
